Generative Art

For a while now, I've been discussing within my company how future designers will likely focus less on creating and more on curating AI-generated ideas.

So I figured it was time to understand a bit more about how the technology works...

Stable Diffusion

I chose to try Stable Diffusion. I've seen it produce interesting results, and now that it is open source, I can use any interesting images it generates as I please.

First, some fun random stuff to make sure the software is working!

prompt: "post apocalyptic world"

prompt: "futuristic woman on motorcycle poster"

Then I started typing random phrases and whatever else came to mind. I wrote a little script to iterate through the list and generate a quick, low-iteration version of each item (16 iterations, or "16it").
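A minimal sketch of that kind of batch script. It assumes the `demo.py` entry point from the stable_diffusion.openvino repo linked in the notes, and assumes that script accepts `--prompt`, `--num-inference-steps` and `--output` (check `python demo.py --help` for your version). The prompt list, the `outputs` folder and the file-naming scheme are hypothetical.

```python
import re
import shlex
import subprocess
import sys
from pathlib import Path
from typing import List

# Hypothetical prompt list: swap in whatever phrases come to mind.
PROMPTS = [
    "post apocalyptic world",
    "futuristic woman on motorcycle poster",
]

# Low step count for quick, rough previews (the "16it" runs).
STEPS = 16


def slugify(text: str) -> str:
    """Turn a prompt into a filesystem-safe file name stem."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def build_command(prompt: str, out_dir: Path, steps: int = STEPS) -> List[str]:
    """Build one demo.py call for a single prompt.

    ASSUMPTION: demo.py from stable_diffusion.openvino accepts --prompt,
    --num-inference-steps and --output; confirm with `python demo.py --help`.
    """
    out_file = out_dir / f"{slugify(prompt)}.png"
    return [
        sys.executable, "demo.py",
        "--prompt", prompt,
        "--num-inference-steps", str(steps),
        "--output", str(out_file),
    ]


def run_batch(prompts: List[str], out_dir: Path, dry_run: bool = True) -> None:
    """Generate one image per prompt, or only print the commands when dry_run is set."""
    if not dry_run:
        out_dir.mkdir(parents=True, exist_ok=True)
    for prompt in prompts:
        cmd = build_command(prompt, out_dir)
        print(shlex.join(cmd))
        if not dry_run:
            # Runs sequentially; each call starts a fresh process that renders one image.
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Pass --run to actually generate; otherwise only print the commands.
    run_batch(PROMPTS, Path("outputs"), dry_run="--run" not in sys.argv)
```

Running it from the repo root without arguments prints the commands so you can sanity-check them; adding `--run` generates the images one after another. Calling the repo's script through `subprocess` keeps the sketch independent of its internal Python API, at the cost of reloading the model for every prompt.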

It was pure, weird awesomeness.

But the real question was: how do we put this to use?

- Could we train a model on our past work, sprinkle in some inspirational elements relevant to an upcoming project, and get anything useful back?

- Could we create a client-facing interface that generates inspirational ideas for clients to choose from, based on our past work, with AI injecting some new, adjacent ideas?

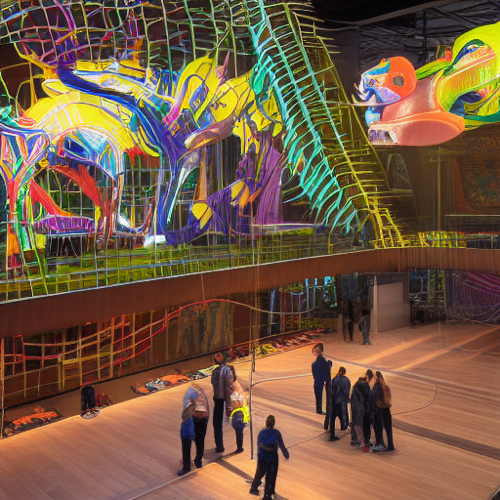

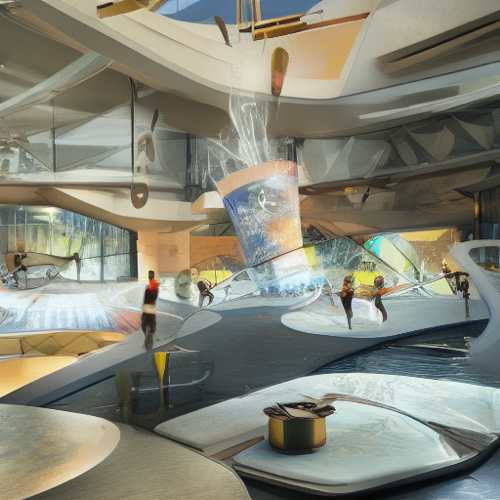

Not sure yet. But for now, here's a fun sampling of the images created...

Notes

- An option for Intel Macs: https://github.com/bes-dev/stable_diffusion.openvino

- Install the dependencies with pip as recommended, but do it inside a virtual environment!

- Each image takes about 3 minutes to generate locally on my i7 processor.
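For the virtual-environment tip, here is a minimal Python sketch using the standard-library `venv` module. It assumes the repo lists its dependencies in a `requirements.txt`; the `sd-env` folder name and the `setup_env`/`env_python` helpers are hypothetical.

```python
import subprocess
import sys
import venv
from pathlib import Path


def env_python(env_dir: Path) -> Path:
    """Interpreter inside a venv: Scripts/python.exe on Windows, bin/python elsewhere."""
    if sys.platform == "win32":
        return env_dir / "Scripts" / "python.exe"
    return env_dir / "bin" / "python"


def setup_env(env_dir: Path, requirements: Path, install: bool = True) -> Path:
    """Create an isolated venv and, optionally, pip-install the requirements into it."""
    # with_pip bootstraps pip inside the new environment via ensurepip.
    venv.create(env_dir, with_pip=install)
    python = env_python(env_dir)
    if install:
        # Same as activating the venv and running `pip install -r requirements.txt`,
        # so the packages land in env_dir instead of the system Python.
        subprocess.run(
            [str(python), "-m", "pip", "install", "-r", str(requirements)],
            check=True,
        )
    return python
```

For example, `setup_env(Path("sd-env"), Path("requirements.txt"))` run from the repo root returns the environment's interpreter, which you can then use to launch the demo without touching the system Python's packages.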

DeepDream

In early 2021, I made a bunch of weird art using a commercialized version of Google's DeepDream generator.

The goal here was to understand generative art, but also how NFTs work.

My experience with DeepDream was that the output tended towards lots of eyeballs and reptile heads no matter what the original model was.

Iterations 1, 4, 7, 10, 20, 30, 40, 50, 60, 70, 80 and 90

I didn't play with it extensively, but after enough iterations, basically all my art had lots of eyes and lizard heads.

I did end up creating an interesting animation and minting an NFT out of it though. So much fun.